The Sri Lanka monk case shocked many people…

Introduction — When a System Quietly Breaks

At first, nothing feels wrong.

A plan works for a while.

Decisions seem justified.

Small compromises feel harmless.

And then—something breaks.

That’s why the recent case out of Sri Lanka caught global attention.

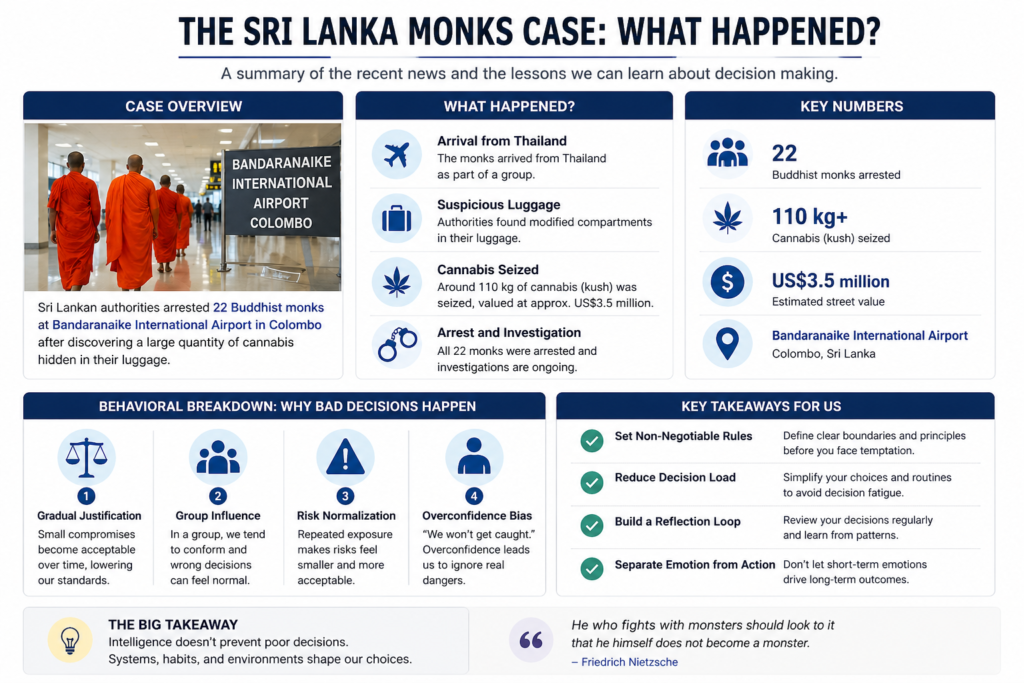

According to multiple international news reports, a group of Buddhist monks were arrested at Bandaranaike International Airport in Colombo after authorities discovered large quantities of cannabis concealed in luggage, allegedly hidden within modified compartments. Outlets reported a total value in the multi-million-dollar range, and the scale of the operation suggested coordination rather than a one-off mistake.

This wasn’t just another crime story.

👉 It raises a deeper question:

How do people in positions of trust end up making decisions like this?

The Wrong Question Most People Ask

The common reaction is predictable:

- “Why would they do something like that?”

- “That makes no sense.”

But these questions don’t get us very far.

👉 The better question is:

“How did this decision become possible?”

Because once you understand the process, you start to see how often it appears in ordinary life.

Intelligence Is Not a Safeguard

We tend to believe that intelligence—or status—protects people from poor judgment.

It doesn’t.

Research in behavioral science has shown that decision-making is heavily influenced by cognitive biases and heuristics, not just logic. As psychologist Daniel Kahneman explains, much of human judgment operates through fast, intuitive thinking that is efficient but error-prone.

In practice, that means:

- People underestimate risk

- They overestimate control

- They rationalize questionable choices

👉 Being “smart” doesn’t eliminate these patterns. It often just hides them better.

What Likely Happened — A Behavioral Breakdown

Viewed through a behavioral lens, cases like this rarely begin with a single extreme decision.

They evolve.

1) Gradual Justification

Most major mistakes start small.

A minor compromise becomes acceptable.

Then another.

And another.

👉 Over time, the internal standard shifts.

What once felt wrong begins to feel “reasonable.”

2) Group Influence

When decisions are made within a group, individual judgment changes.

Classic experiments by Solomon Asch demonstrated that people often conform to group opinion—even when it is clearly incorrect.

👉 In a group, wrong decisions can start to feel normal.

3) Risk Normalization

Repeated exposure reduces perceived danger.

Something that initially feels risky becomes routine.

This is known as normalization of deviance—a pattern studied in organizational failures where risky behavior becomes accepted simply because it hasn’t caused immediate consequences.

👉 The risk doesn’t disappear.

👉 The perception of risk does.

4) Overconfidence Bias

At some point, a critical belief takes hold:

👉 “We won’t get caught.”

This isn’t logic. It’s bias.

And it shows up in many major decision failures, from financial scandals to operational breakdowns.

A Necessary Critique — This Was Avoidable

It’s important to be clear:

👉 This wasn’t just an unfortunate mistake.

- The risks were obvious

- The stakes were high

- The pattern suggests repeated decisions, not a single lapse

👉 This was a systemic failure of judgment.

And that matters, because it means:

👉 It could have been prevented.

Why This Matters Beyond the News

It’s easy to distance ourselves from a story like this.

But the structure is familiar.

We all do versions of this:

- “Just this once”

- “It’s not a big deal”

- “I can handle it”

👉 Over time, small decisions compound.

Not into headlines—but into habits, outcomes, and trajectories.

The System Behind Better Decisions

Better decisions don’t come from stronger willpower.

👉 They come from better systems.

1) Define Non-Negotiable Rules

Decide in advance what you will not do.

Clear boundaries reduce the need for real-time judgment.

2) Reduce Decision Load

More choices = more errors.

Simple systems outperform complex ones because they reduce friction.

3) Build a Reflection Loop

At the end of each day or week, ask:

- What decisions did I make?

- What influenced them?

- What should change?

👉 Awareness creates adjustment.

4) Separate Emotion from Action

Emotions fluctuate.

Decisions shouldn’t.

Systems allow you to act consistently, even when your internal state changes.

A Line Worth Remembering

Philosopher Friedrich Nietzsche once wrote:

“He who fights with monsters should look to it that he himself does not become a monster.”

The danger isn’t always external.

👉 It’s in the gradual shift of our own standards.

Conclusion

The Sri Lanka airport case is not just a news story.

👉 It’s a case study in how decisions fail.

The takeaway is simple:

- Intelligence doesn’t prevent poor judgment

- Repetition changes what feels acceptable

- Systems shape outcomes

And ultimately:

👉 Decisions are not random. They are structured.

If you want better results, you don’t just need better thinking.

👉 You need a system that makes the right decision easier than the wrong one.
